Graphics Cards, The Gamers' Best Friend, May Succeed In Simplifying The Solution Of Complex Equations

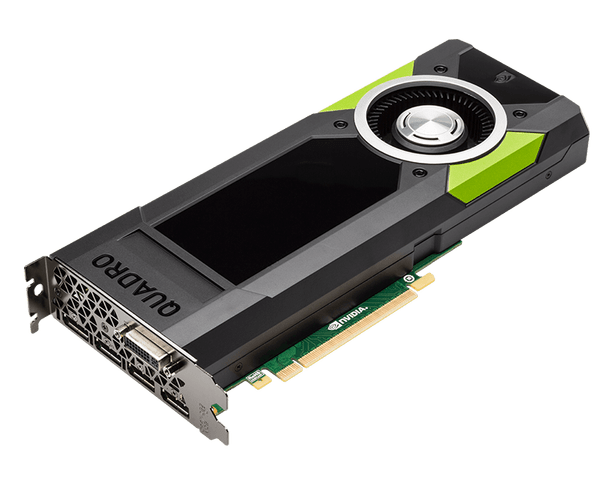

Does your brain light up at the mention of high-end graphics games? Are you picky about choosing the best video card or gaming laptop for spending hours playing AC Unity, Skyrim, FC 3, Crysis 3, and the GTA and COD series? Then you may be amazed to learn that the power behind your games, your geeky graphics card, is also a tool for running complex mathematical computations. Did that just kill your interest? Well, if we hypothetically accept the premise of "Scientists Are Set To Uncover New Secrets Of The 'Mathematical Universe'", then graphics cards mark the point where gaming and mathematics converge.

Fair enough? Putting the philosophy aside, a group of researchers from KAUST's Extreme Computing Research Center has literally used these graphics cards to solve systems of simultaneous equations involving enormous numbers of variables. According to Professor David Keyes of the Department of Applied Mathematics and Computational Science, such systems appear in statistics, optimization, electrostatics, chemistry, and astronomy, fields where scientists depend on heavy computation and where results are at the mercy of execution time and energy consumption.
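To make "simultaneous equations" concrete, here is a minimal sketch that solves a small system with textbook Gaussian elimination: it first reduces the equations to triangular form, then solves that triangular system by back substitution. It is a plain-Python illustration with a hypothetical function name, not the researchers' GPU solver; real problems of the kind Keyes describes involve vastly more variables and rely on optimized libraries.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting.

    a is a list of n rows (each a list of n numbers); b is a list of n numbers.
    The function works on a copy, so the caller's data is left untouched.
    """
    n = len(a)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]

    # Forward elimination: reduce the system to upper-triangular form.
    for col in range(n):
        # Partial pivoting: use the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if m[pivot][col] == 0:
            raise ValueError("matrix is singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]

    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


# Three simultaneous equations in three unknowns:
#    2x +  y -  z =   8
#   -3x -  y + 2z = -11
#   -2x +  y + 2z =  -3
print(solve_linear_system([[2, 1, -1], [-3, -1, 2], [-2, 1, 2]], [8, -11, -3]))
```

The exact solution is x = 2, y = 3, z = -1, so the printed values match it up to floating-point rounding. The cost of elimination grows roughly with the cube of the number of unknowns, which is why large systems push scientists toward fast, energy-efficient hardware.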

This is what Keyes and his team set out to change. Graphics processing units (GPUs) are more energy efficient than conventional computer processors because surplus hardware components do not get in the way of the processing. However, building the supporting software proved to be a challenge, one that was met by a solver designed by Ph.D. student Ali Charara. According to Professor Keyes, Charara drew on knowledge gained during his internship at NVIDIA to strike the right trade-off between memory storage and the number of processors.

Hatem Ltaief, a Senior Research Scientist on the team, explained that the solver requires no extra memory and operates directly on the data it is given. Put simply, the solver replaces the sequential, column-based approach with a formulation built around triangular matrices. The upgraded solver will soon be added to the next release of NVIDIA's GPU library. The corresponding research paper was published in the proceedings of the European Conference on Parallel Processing (Euro-Par).
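The article does not spell out the solver's algorithm. As a rough illustration only, the sketch below assumes the core operation is a triangular solve (finding x in L x = b for a lower-triangular matrix L) and shows two properties the description points at: the solution overwrites the right-hand side, so no extra array is allocated, and the work is organized recursively around triangular blocks rather than strictly column by column. The function name and the half-and-half recursive split are illustrative choices, not the KAUST team's GPU code.

```python
def solve_lower_triangular_inplace(l, b, lo=0, hi=None):
    """Solve l x = b for lower-triangular l, overwriting b with x.

    Hypothetical helper. Rows/columns lo..hi-1 of l form one diagonal block,
    which is split in half: solve the top half, subtract its contribution
    from the bottom right-hand side (the off-diagonal update), then solve
    the bottom half. No extra array is allocated: b becomes the solution.
    """
    if hi is None:
        hi = len(b)
    n = hi - lo
    if n <= 2:
        # Base case: plain forward substitution on a tiny diagonal block.
        for i in range(lo, hi):
            for j in range(lo, i):
                b[i] -= l[i][j] * b[j]
            b[i] /= l[i][i]
        return
    mid = lo + n // 2
    solve_lower_triangular_inplace(l, b, lo, mid)   # top diagonal block
    for i in range(mid, hi):                        # b_bottom -= L21 * x_top
        for j in range(lo, mid):
            b[i] -= l[i][j] * b[j]
    solve_lower_triangular_inplace(l, b, mid, hi)   # bottom diagonal block


L = [[2, 0, 0, 0],
     [1, 3, 0, 0],
     [4, -1, 5, 0],
     [0, 2, 1, 1]]
b = [2, 7, 17, 11]  # b = L times [1, 2, 3, 4]
solve_lower_triangular_inplace(L, b)
print(b)  # [1.0, 2.0, 3.0, 4.0]
```

The recursive split matters on real hardware: when many right-hand sides are solved at once, the off-diagonal update becomes a large matrix-matrix multiplication, the kind of work GPUs execute very efficiently.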

Source: Taking graphics cards beyond gaming | KAUST Discovery

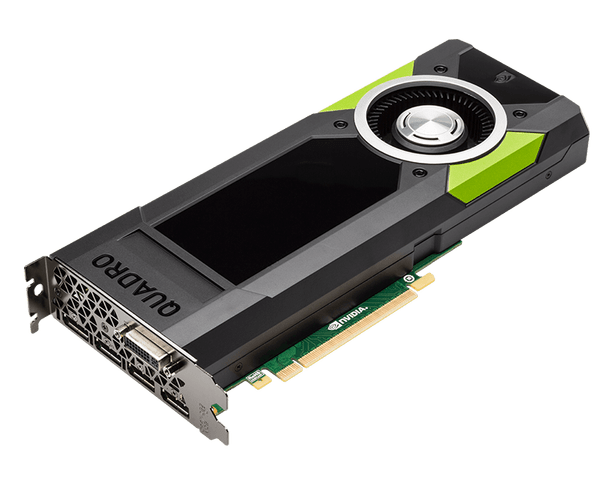

