Driverless Cars Could Soon 'See' And Identify Their Surroundings - Cambridge Research

A team of researchers from the University of Cambridge has come up with two new systems that could revolutionise the tech behind #-Link-Snipped-#. Built on deep learning algorithms, the new technologies help machines recognise where they are and what is around them, and they can run on a regular smartphone or camera. The interesting part is that the systems could let a driverless car identify its surroundings even in places where GPS does not work. If put into action, this technology could be pivotal in bringing driverless cars into the mainstream in the near future.

The SegNet System

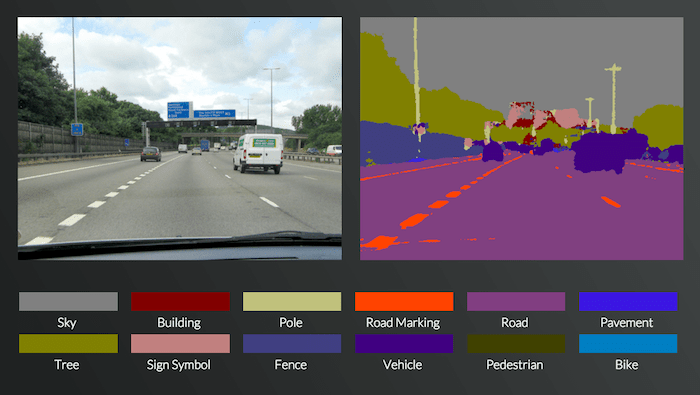

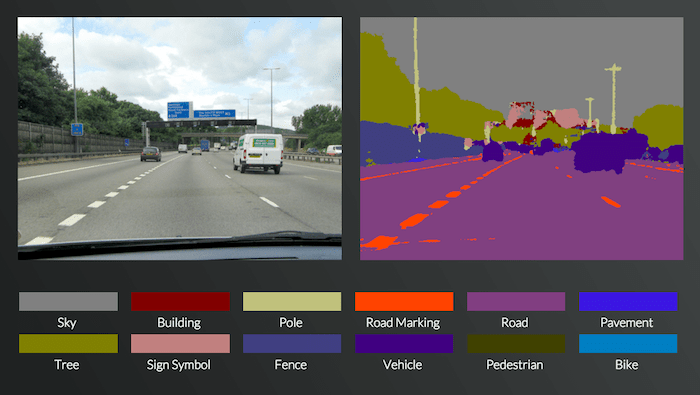

The first technology is named 'SegNet', a system that can take a picture of a street it has never seen before and sort its contents into 12 different categories, such as sky, building, road marking, pedestrian, fence, tree and pavement.

SegNet is capable of functioning at 90% accuracy in different lighting conditions, such as afternoon light, shadows or night time. Existing technologies based on lasers or radar have not been able to achieve this level of accuracy in real time.
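To make the idea of labelling a picture and scoring the result more concrete, here is a minimal sketch in plain Python. It is not SegNet itself: the scores are made up, the helper names are hypothetical, and only the seven category names mentioned above are used. It also assumes that the accuracy figure means the fraction of pixels labelled correctly, which the article does not spell out.

```python
# Illustrative only: toy per-pixel labelling, not the SegNet network.
# Only the category names listed in the article are used; all scores are invented.
CATEGORIES = ["sky", "building", "road marking", "pedestrian", "fence", "tree", "pavement"]


def scores_for(category, confidence=0.9):
    """Hypothetical helper: build a score vector that favours one category."""
    return [confidence if name == category else 0.1 for name in CATEGORIES]


def label_pixels(scores):
    """Pick the highest-scoring category index for every pixel.

    `scores` is a grid (rows x columns) where each cell holds one score per category.
    """
    return [
        [max(range(len(cell)), key=cell.__getitem__) for cell in row]
        for row in scores
    ]


def pixel_accuracy(predicted, truth):
    """Fraction of pixels whose predicted label matches the hand-made label."""
    total = sum(len(row) for row in truth)
    correct = sum(
        p == t
        for predicted_row, truth_row in zip(predicted, truth)
        for p, t in zip(predicted_row, truth_row)
    )
    return correct / total


# A made-up 2x2 "image": one score vector per pixel.
toy_scores = [
    [scores_for("sky"), scores_for("sky")],
    [scores_for("building"), scores_for("pavement")],
]
# Made-up hand labels; the bottom-right pixel disagrees with the prediction.
hand_labels = [
    [CATEGORIES.index("sky"), CATEGORIES.index("sky")],
    [CATEGORIES.index("building"), CATEGORIES.index("tree")],
]

predicted = label_pixels(toy_scores)
accuracy = pixel_accuracy(predicted, hand_labels)
print(f"Pixel accuracy: {accuracy:.2f}")
```

In this toy case three of the four pixels match the hand labels. A real system would produce the scores with a trained network rather than a lookup helper.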

To demonstrate their research work, the Cambridge engineering team has set up an online portal (#-Link-Snipped-#) that lets you see their technology in action. Users can upload any photograph of their locality and the system will label its components using those 12 categories.

The problem with the radar or LIDAR based sensing systems presently used in driverless cars is that they are very expensive (they cost more than the car itself). SegNet, in contrast, relies on machine learning: a group of Cambridge engineers manually categorised over 5000 images and used them to teach the system to do the job on its own.

Currently, SegNet performs well on urban roads, highways and motorways. However, in more complex environments such as snowy, desert or rural areas, the system still has a lot to learn.

While the research team thinks that the system isn't yet ready for controlling driverless cars, it definitely holds potential to replace current anti-collision technologies in passenger cars.

The Localisation System

Once 'SegNet' has established a car's surroundings, i.e. "what's around me?", the next big question is "where am I?". To answer that, the researchers came up with a localisation system that determines the machine's current location and orientation from a single colour image, based on the geometry of the scene.

The researchers were astonished to find that their test results showed greater accuracy than present GPS systems. They tested the system on King's Parade in central Cambridge, where it successfully identified both the location and the car's orientation. A demonstration has been made available #-Link-Snipped-#.
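As a rough illustration of what "location as well as orientation" means in practice, the sketch below compares an estimated pose against a reference pose. Everything here is an assumption made for the example: the `Pose` type, the flat two-dimensional coordinates in metres, the heading in degrees and the sample numbers are hypothetical and do not come from the Cambridge system.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """Hypothetical pose: a position on a flat map plus a compass-style heading."""
    x_m: float
    y_m: float
    heading_deg: float


def position_error_m(estimate, reference):
    """Straight-line distance between the two positions, in metres."""
    return math.hypot(estimate.x_m - reference.x_m, estimate.y_m - reference.y_m)


def heading_error_deg(estimate, reference):
    """Smallest angle between the two headings, so 350 and 10 degrees differ by 20."""
    diff = (estimate.heading_deg - reference.heading_deg) % 360.0
    return min(diff, 360.0 - diff)


# Made-up numbers purely for illustration.
estimate = Pose(x_m=3.0, y_m=4.0, heading_deg=350.0)
reference = Pose(x_m=0.0, y_m=0.0, heading_deg=10.0)

print(position_error_m(estimate, reference))   # distance between the two positions
print(heading_error_deg(estimate, reference))  # wrapped difference in heading
```

Wrapping the heading difference matters because headings just either side of north are close together even though their raw values are far apart.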

In a video accompanying the research, Alex Kendall from the University of Cambridge's Department of Engineering explains how the team is teaching machines to see.

The Applications

The next stop for this technology could be domestic robots such as autonomous vacuum cleaners. However, the research team believes that once they can completely trust their system, we will have moved really close to the widespread adoption of cars without drivers.

What are your thoughts on this new research work based on artificial intelligence? Share them with us in the comments below.

Source: Teaching machines to see: new smartphone-based system could accelerate development of driverless cars | University of Cambridge

